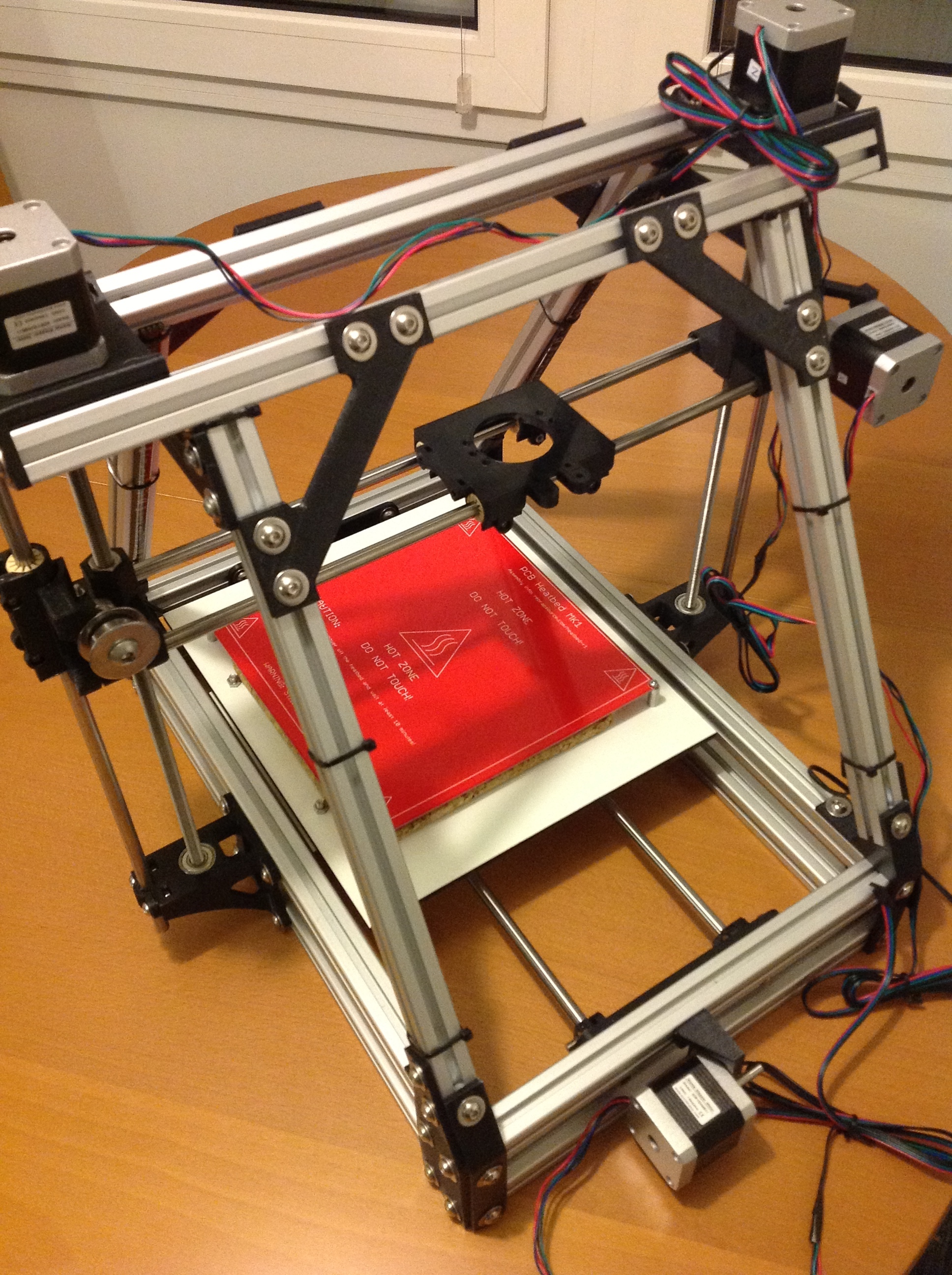

My Mendelmax 3D printer is showing its age and I’m no longer satisfied with its format and design. So when I found a 50% discount on Makeblock parts at my favourite tech store, EXP Tech, I decided to start a new 3D printer design from the ground up using Makeblock components.

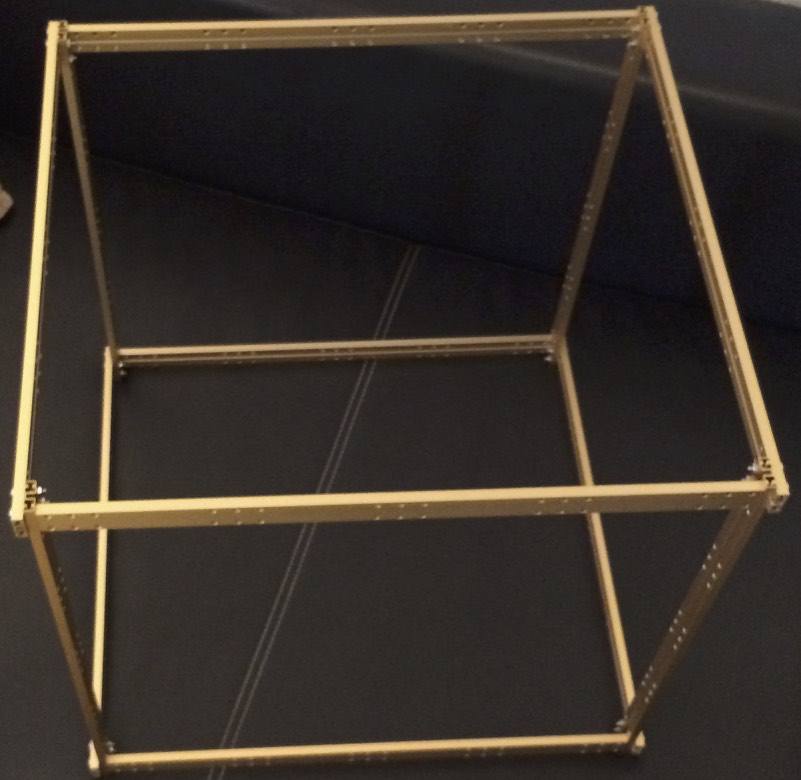

I’m not yet sure which basic hardware principle the 3D printer will end up using. The kinematics could be CoreXY or a traditional three-axis Cartesian layout (but definitely not a delta system), and the motion hardware could be V-rail sliders, linear motion guides, or traditional round shafts.
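To make the CoreXY option a bit more concrete: in a CoreXY machine both motors work together for every X or Y move, instead of one motor per axis. Here is a minimal sketch of that mapping, assuming the commonly used sign convention (motor A follows x + y, motor B follows x - y). The function names are made up for illustration, and the signs on a real build depend on how the belts are routed.

```python
def cartesian_to_corexy(x, y):
    """Convert a Cartesian position (x, y) into belt travel for the two motors.

    Assumed convention: A = x + y, B = x - y. A real machine may need
    one or both signs flipped depending on belt routing and motor wiring.
    """
    a = x + y
    b = x - y
    return a, b


def corexy_to_cartesian(a, b):
    """Inverse mapping: recover (x, y) from the two motor positions."""
    x = (a + b) / 2
    y = (a - b) / 2
    return x, y


if __name__ == "__main__":
    # A pure X move turns both motors in the same direction,
    # a pure Y move turns them in opposite directions.
    print(cartesian_to_corexy(10, 0))
    print(cartesian_to_corexy(0, 5))
```

On a traditional Cartesian printer the mapping is simply one motor per axis, so no such conversion is needed.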

I bought so many Makeblock parts that I might be able to try out any of these concepts.

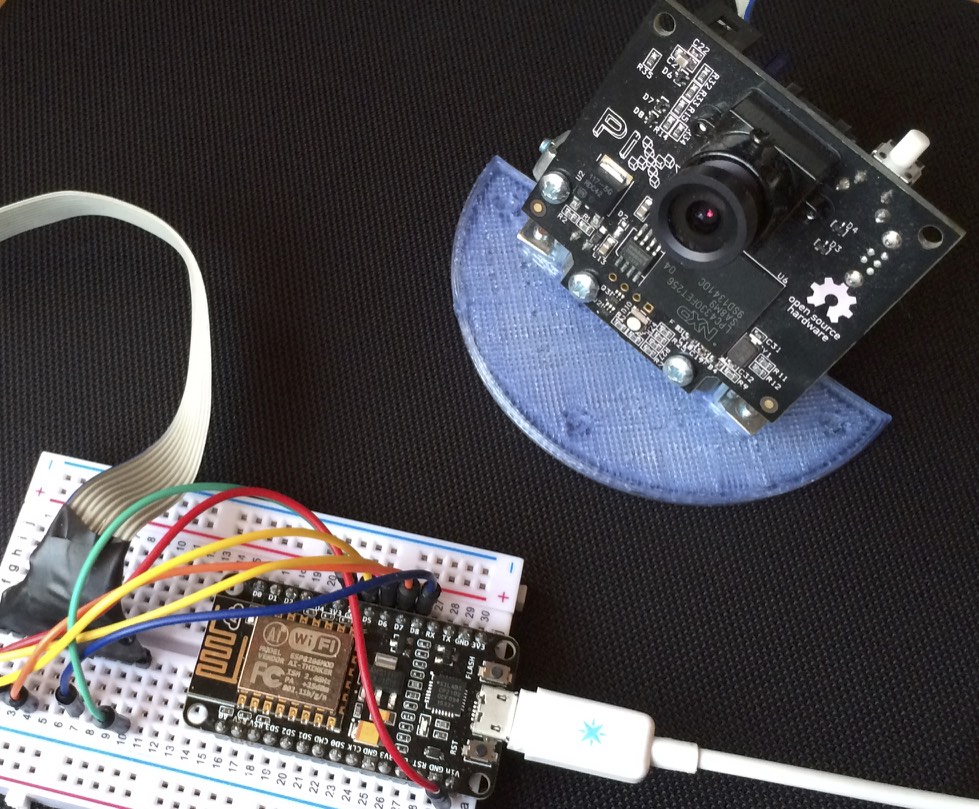

I was also able to get a milling spindle and a capacitive distance sensor, and maybe I’ll add a laser engraving option later on.

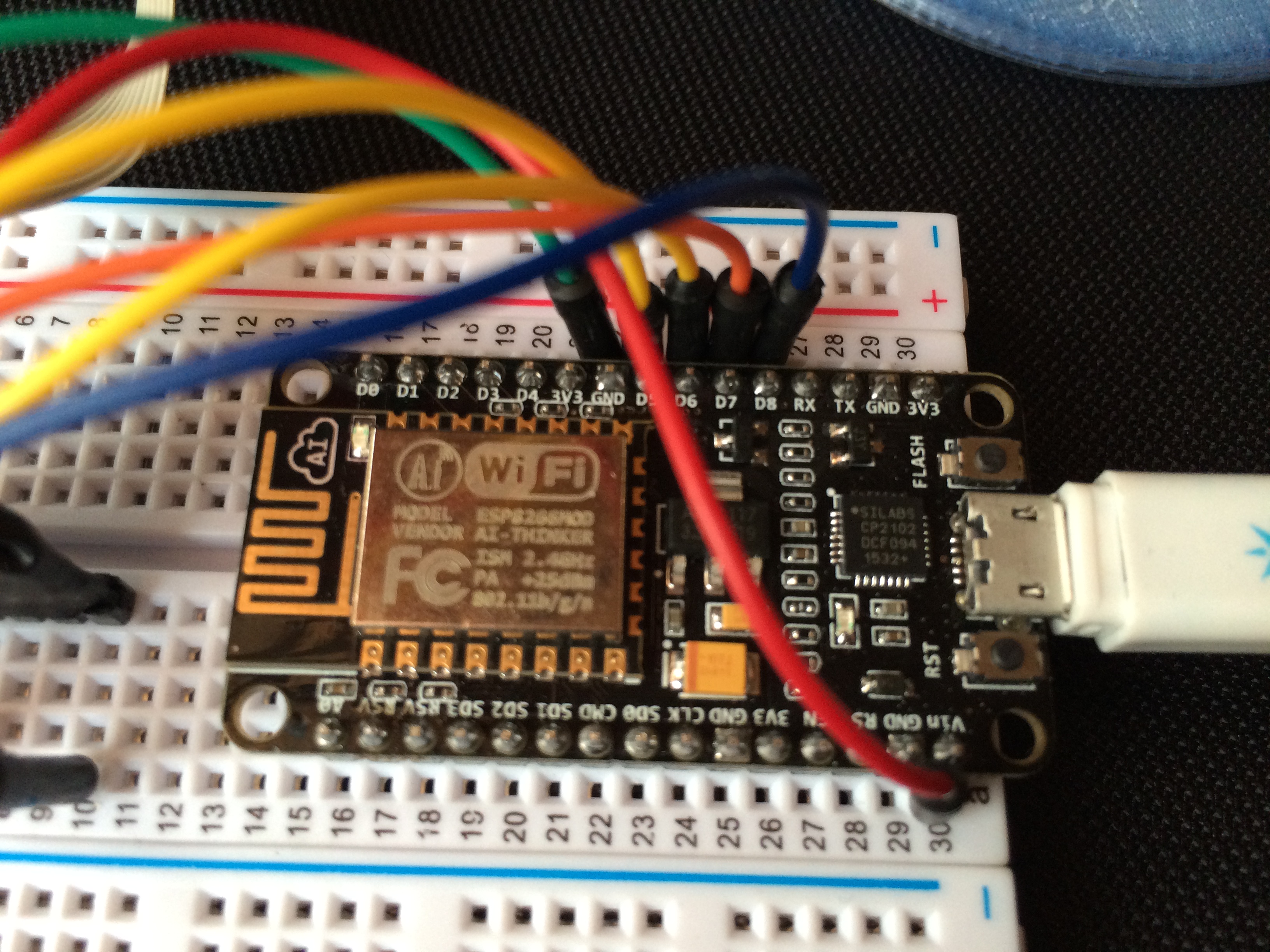

Replacing the RAMPS and Arduino electronics with more capable stepper drivers and a 32-bit microcontroller with floating-point support might also change the way the new machine prints compared to my old Mendelmax, allowing higher speeds and smoother, more exact positioning than before.

We will see. There is lots of stuff to try out during my holiday.